Designing for Failure

10/17/2017 Category: Network Design

June’s British Airways IT outage, apparently triggered by a power system failure, caused a stir among air travelers and the IT industry alike. Interestingly, the highly publicized August 2016 data center outage at Delta Airlines was also power system related. Why did a failure in a power system component bring down an entire data center? And why did neither airline have a functioning disaster recovery plan to fail over to a backup data center?

I’m a network engineer rather than a power systems engineer, so I’m even more curious about the July 2016 Southwest Airlines data center outage, attributed to the failure of a single router. My first reaction was that any CCNA should be able to come up with a network design that can survive a router failure. Southwest says that it was an unpredictable partial failure that wasn’t detected by backup systems. COO Mike Van de Ven said that when the crash happened, the failure caused cascading overloads and freezes. As with the Delta failure, their disaster recovery systems didn’t work.

The megabucks required to make everything, including the data centers themselves, redundant are always going to send the denizens of the boardroom into sticker shock. Those costs are tangible. The cost of a critical systems failure that brings the business to a halt and puts it on the front page of the Wall Street Journal is nebulous, at least until it happens.

But even when we have the funding for a redundant design, we often take too narrow a view in the design. We select individual network components for survivability. We require redundant components. We look for MTBF and MTTR statistics. We analyze HA features. All of this is good for selecting network components up to a point, but it’s myopic when assessing overall network design.
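To make the component-level view concrete, here is a minimal sketch of the usual steady-state availability arithmetic, MTBF / (MTBF + MTTR), combined in series and in parallel. The MTBF and MTTR figures are hypothetical, and the parallel formula assumes failures are independent, which is exactly the assumption that partial failures and cascading overloads tend to violate.

```python
def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability of one component: uptime / (uptime + downtime)."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


def series(*parts: float) -> float:
    """Availability when every part must be up (a chain of single points of failure)."""
    result = 1.0
    for a in parts:
        result *= a
    return result


def parallel(*parts: float) -> float:
    """Availability when at least one part must be up.

    Assumes the parts fail independently of one another.
    """
    all_down = 1.0
    for a in parts:
        all_down *= 1.0 - a
    return 1.0 - all_down


# Hypothetical figures, for illustration only.
router = availability(mtbf_hours=50_000, mttr_hours=4)
power = availability(mtbf_hours=100_000, mttr_hours=8)

redundant_routers = parallel(router, router)
site = series(redundant_routers, power)

print(f"single router:     {router:.6f}")
print(f"redundant routers: {redundant_routers:.9f}")
print(f"site (with power): {site:.6f}")
```

The redundant router pair looks nearly perfect on paper, yet the site as a whole is no better than the shared power system behind it. The numbers are only as good as the independence assumption.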

We’re selecting for survivability, rather than designing for failure. That is, we neglect to work under the assumption that a component will fail.

Southwest CEO Gary Kelly said of their outage, “In 45 years we’ve never had a challenge like this one… We have to do everything we can to understand it and prevent it from ever happening again.” Those two statements are telling:

- Systems that don’t fail often enough can contribute to unpreparedness.

- Change happens at such a rate that just because your network can survive a failure this week doesn’t necessarily mean that it can survive the same failure next week.

Once we embrace the assumption that systems will fail, we are better prepared to design for those failures. We can even go a step further and actively promote failures in our network, to reveal shortcomings in the architecture and in our processes.

Unleashing the Simian Army

Conventional wisdom says that we never, ever do anything to our network that might risk a failure. No changes outside of maintenance windows. Tight change management oversight. Thorough regression testing.

Actually allowing a failure to happen is nuts.

Then along came Netflix, and an application called Chaos Monkey. First deployed circa 2010, Chaos Monkey randomly kills server instances, services, and, more recently, Docker containers. What is shocking to those unfamiliar with Chaos Monkey is that it terminates these servers and containers in the production network, not in some controlled environment and not at any scheduled time (other than not injecting failures on weekends and holidays or outside of normal business hours).
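The scheduling idea can be sketched in a few lines. This is not Netflix’s code or its API; it is a minimal illustration in which the function names, the business-hours window, and the instance names are all my own assumptions. It only chooses a victim; actually terminating anything is left out.

```python
import random
from datetime import date, datetime, time
from typing import Optional, Sequence

# Hypothetical window: failures are injected only while engineers are at work.
BUSINESS_START = time(9, 0)
BUSINESS_END = time(15, 0)


def in_failure_window(now: datetime, holidays: frozenset = frozenset()) -> bool:
    """Return True only on non-holiday weekdays during business hours."""
    if now.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        return False
    if now.date() in holidays:
        return False
    return BUSINESS_START <= now.time() < BUSINESS_END


def pick_victim(
    instances: Sequence[str],
    now: datetime,
    rng: random.Random,
    holidays: frozenset = frozenset(),
    probability: float = 1.0,
) -> Optional[str]:
    """Randomly choose one instance to kill, or None if nothing should die now."""
    if not instances or not in_failure_window(now, holidays):
        return None
    if rng.random() >= probability:  # skip this round some of the time
        return None
    return rng.choice(list(instances))


if __name__ == "__main__":
    fleet = ["web-1", "web-2", "api-1"]  # hypothetical instance names
    victim = pick_victim(fleet, datetime.now(), random.Random(), probability=0.5)
    print(f"would terminate: {victim}")
```

Passing the clock and the random generator in as arguments keeps the selection logic testable, even though the whole point in production is that nobody knows which instance goes next.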

The philosophy behind Chaos Monkey is that “the best way to avoid failure is to fail constantly.” Applications change regularly, and just because an app can survive a failure in your infrastructure today is no guarantee that it can survive tomorrow. Nor can you fully anticipate every circumstance in which a failure takes place, so testing failure scenarios in a lab is inadequate.

The unpredictability, frequency, and randomness of the failures in real time and on the live network help ensure that Netflix engineers have “seen it all” and can be more confident that the infrastructure is truly resilient.

Chaos Monkey is one of several similar applications created at Netflix. Conformity Monkey kills systems that are not in conformance with defined best practices, and Security Monkey kills instances that violate defined security policies. Others include Doctor Monkey (weeding out ailing systems) and Janitor Monkey (cleaning up unused resources). Together the suite of monkeys is called the Simian Army, and Netflix makes the applications available on GitHub for anyone who wants to use them.

Facebook applies the concept of intentional failures on a large scale with its Project Storm, in which entire data centers are taken offline to test the effects on user traffic. The idea came about after 2012’s Hurricane Sandy threatened two Facebook data centers on the East Coast. VP of Engineering Jay Parikh said, “It’s one thing to say, Hey, I gathered some data, I put this in some Excel model… But if you don’t actually verify, then what are you going to do with an actual real-life drill with live traffic to prove that is your actual limit?”

These approaches turn the old adage, “If it ain’t broke don’t fix it,” entirely on its head by saying, “Let’s break stuff to find out what needs fixing.” And it all ties back to the several airline industry outages I cited, not to mention countless less publicized outages that occurred because a component failure had unanticipated consequences. Complex systems have too many system interactions to confidently predict every failure scenario, and complex systems – along with the applications they support – change frequently. Unless failures are happening regularly, you can’t know for sure whether your recovery plans are reliable.

Don't Build Snowflakes

“Snowflake” has become an epithet in uncivil political comments on social media, implying that a person is overly fragile and their convictions easily destroyed. That sobriquet might apply to networks also: Fragility is to be avoided in every part of a network. So in that sense, certainly, don’t build snowflakes.

But in network design, “snowflake” has another meaning. Just as every snowflake is thought to be unique, a snowflake network design is one-of-a-kind. This isn’t a good thing, because one-of-a-kind networks mean one-of-a-kind problems and unpredictable failure characteristics.

Whether you are designing data center pods, extended campus networks, branch offices, service provider POPs, or regional networks, you should aim for modular designs that can be replicated over and over throughout the network. The advantage of this approach is that if you understand one module in the design, you should understand all modules in the design. Most importantly, as failures arise and are remedied in one module you can apply the learned lesson to all other modules, and you are less likely to encounter unique failures.
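One way to keep yourself honest about this is to compare every deployed module against the standard and list the deviations. The sketch below assumes each module can be described by a flat set of design parameters; the parameter names, values, and POP names are hypothetical.

```python
from typing import Any, Dict, Tuple

# Hypothetical standard module: every POP is supposed to look like this.
STANDARD_POP: Dict[str, Any] = {
    "edge_routers": 2,
    "uplinks": 2,
    "igp": "IS-IS",
}


def find_one_offs(
    standard: Dict[str, Any], sites: Dict[str, Dict[str, Any]]
) -> Dict[str, Dict[str, Tuple[Any, Any]]]:
    """Return, per site, each parameter that differs as (standard, actual)."""
    deviations = {}
    for name, params in sites.items():
        diff = {
            key: (standard.get(key), params.get(key))
            for key in standard.keys() | params.keys()
            if standard.get(key) != params.get(key)
        }
        if diff:
            deviations[name] = diff
    return deviations


sites = {
    "pop-east": {"edge_routers": 2, "uplinks": 2, "igp": "IS-IS"},
    "pop-west": {"edge_routers": 2, "uplinks": 1, "igp": "IS-IS"},  # a snowflake
}

for site, diff in find_one_offs(STANDARD_POP, sites).items():
    print(site, diff)
```

An empty result means every module matches the standard. Anything else is a one-off that deserves either a fix or a very good justification.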

One-of-a-kind network components lead to one-of-a-kind failures.

Sticking to a modular, cookie-cutter approach to design is not as easy as it sounds. I recently helped redesign a regional service provider network. Although the network was small, it supported a gamut of services: Business and residential Internet, business VPNs, business and residential voice, cellular, live and on-demand video entertainment, and managed security. So there were a lot of moving parts.

A principle agreed to in the initial engineering meetings was to control complexity through consistent, repeatable modular design. “One-offs” were prohibited. As the project progressed, though, this agreement became more difficult to follow: There were multiple cases in which a one-off segment would have been easier to build than a standardized module. Temptations loomed, many contentious engineering meetings were held, and in the end consistency prevailed.

Snowflakes are hard to resist, but it can be done.

Limiting the Blast Radius

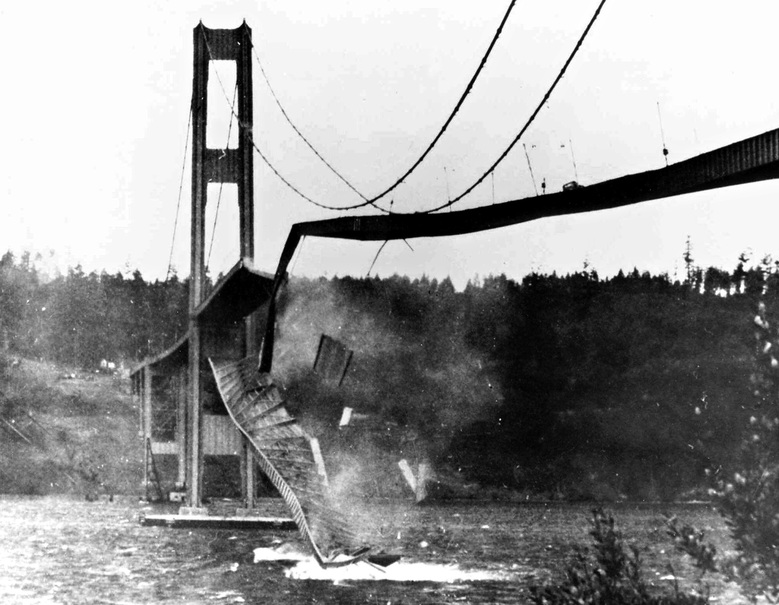

An enduring myth about the origins of the ARPANET, the predecessor of the modern Internet, is that it was designed to survive a nuclear attack. Larry Roberts, the principal architect of ARPANET, was certainly interested in network survivability, but his motivation was unreliable links and nodes, not Soviet bombs. He wanted a network that could survive cut cables, not radioactive craters.

Hence data packetization and dynamic routing were created to route around unavailable parts of the network.

The challenge for network architects that remains to this day is to limit the extent of a failure’s effects. The set of systems a failure can affect is its failure domain. Russ White defines a failure domain thus:

A failure domain is any group of devices that will share state when the network topology changes.

Failure domains are pretty simple to delineate at L1 and L2. A broken physical link defines a failure domain for anything depending on that link. Whether the domain extends to all systems downstream of the link depends on whether there are functions in place to detect the failure and reroute traffic to another equally good link. An L2 failure domain is also pretty simple. For example, an Ethernet broadcast domain – either an Ethernet segment or a VLAN – usually also delineates a failure domain.
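The link-failure case can be sketched as a reachability check on a graph: remove one link and see which nodes can no longer reach a reference point. The topology and node names below are hypothetical, and the sketch assumes the network actually detects the failure and reroutes over any surviving path, which is the very thing that has to be verified in practice.

```python
from collections import deque
from typing import FrozenSet, Iterable, Set, Tuple

Link = Tuple[str, str]


def reachable(
    links: Iterable[Link], source: str, failed: FrozenSet[FrozenSet[str]] = frozenset()
) -> Set[str]:
    """Breadth-first search over undirected links, skipping failed ones."""
    adjacency = {}
    for a, b in links:
        if frozenset((a, b)) in failed:
            continue
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    seen = {source}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in adjacency.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen


def blast_radius(links: Iterable[Link], source: str, failed_link: Link) -> Set[str]:
    """Nodes that can reach `source` before the failure but not after it."""
    links = list(links)
    before = reachable(links, source)
    after = reachable(links, source, frozenset([frozenset(failed_link)]))
    return before - after


# Hypothetical topology: access1 is dual-homed, access2 is single-homed.
LINKS = [
    ("core", "dist1"),
    ("core", "dist2"),
    ("dist1", "access1"),
    ("dist2", "access1"),
    ("dist1", "access2"),
]

print(blast_radius(LINKS, "core", ("core", "dist1")))     # redundant path survives
print(blast_radius(LINKS, "core", ("dist1", "access2")))  # single-homed node is cut off
```

Losing the core-to-dist1 link isolates nothing here because an alternate path exists, while losing the only link to access2 cuts it off entirely. The graph only tells you a path exists, not that your protocols will find it in time.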

At L3 things become more difficult to define, but usually follow the patterns of information sharing. Inadvertently leaking full Internet routing tables from BGP into OSPF – something that happens with shocking regularity even in large service provider networks – is a good example both of defining a failure domain and trying to control its impact. 600,000 or so routes will bring an OSPF process to its knees, if not cause the entire router to crash. And due to the nature of OSPF LSA flooding, correcting the problem is not as simple as stopping the redistribution leak. You can be playing whack-a-mole within the OSPF domain for hours or even days.

And with all due respect to Russ’ definition, I submit that there are other factors that can define a failure domain beyond the effects of topology changes on L2 or L3 state. Think about the data center power failures cited at the beginning of this article. The blast radius of those failures was, from a network perspective, an entire data center. From a business perspective, due to inadequate or nonexistent disaster recovery, the failure domain encompassed the entire airline operation.

So failure domains can overlap both within and between network layers, and can even encompass dependent systems such as power and environment. Identifying and limiting their scope and interactions can be a complex exercise, and if your network is not experiencing failures regularly it might also be an exercise in prognostication.

Swallowing Failures in Small Bites

I learned to ski in my early twenties. My instructor told me, “If you’re not falling down you’re not learning anything.” What he meant was that you have to push the limits of your current capabilities if you want to improve, and that means pushing yourself into frequent falls.

Little failures.

Failures are learning experiences. Attempting to run a failure-free network teaches you nothing about the behavior of your network. You might spend most of your ski trip carefully wedging down the beginner slopes and staying upright, but inevitably you’re going to hit that patch of ice or cross paths with an out-of-control snowboarder, and not have the skills to deal with it.

For every segment or system you add to your network, assume that it’s going to fail. Then ask yourself how that failure is going to affect your operation, what the extent of the failure will be, and how you’re going to recover from the failure. If you’ve designed with confidence, test your assumptions by inducing failures and measuring the results.

If you want to learn more, I highly encourage you to read Navigating Network Complexity: Next-Generation Routing with SDN, Service Virtualization, and Service Chaining by Russ White and Jeff Tantsura. It revisits networking basics from a new perspective that analyzes state, speed of change, and interaction surfaces to control complexity. The last is particularly instructive, looking at the depth and breadth of the interaction of two systems. I recommend the book both to beginning networkers and to experienced architects needing a fresh perspective on design practices.